It's best thought of as a "guided self-help ally," says Athena Robinson, chief clinical officer for Woebot Health, an AI-driven chatbot service.

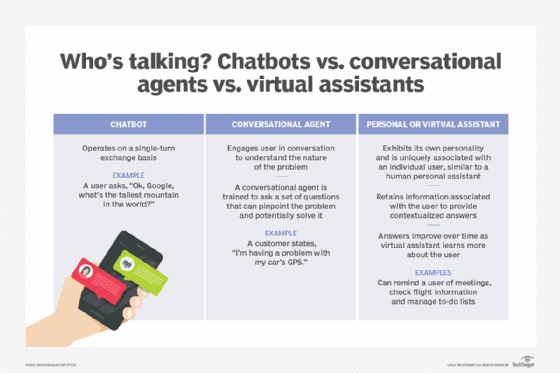

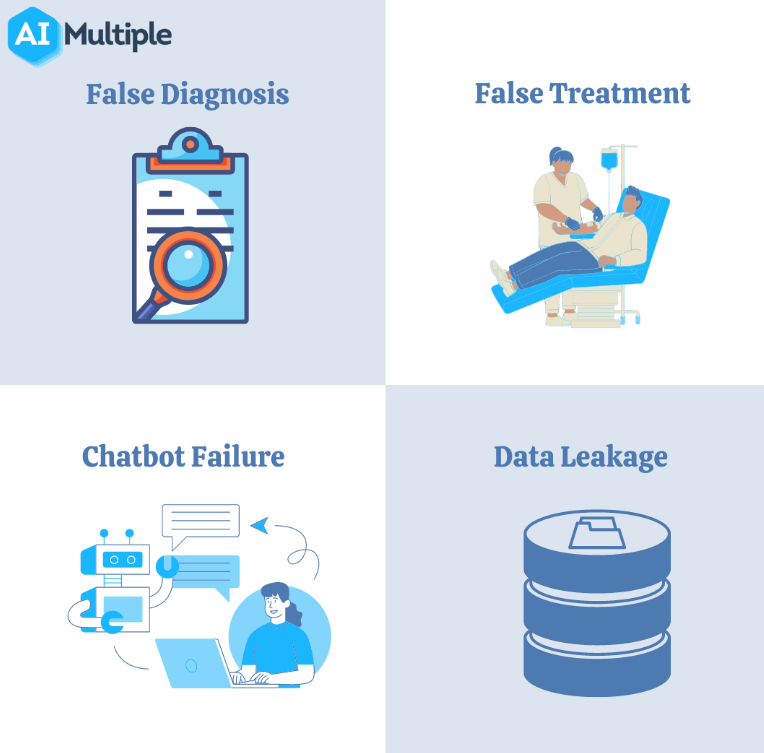

That is how Ali found herself on a new frontier of technology and mental health. Advances in artificial intelligence - such as ChatGPT - are increasingly being looked to as a way to help screen for, or support, people who are dealing with isolation, or mild depression or anxiety. Human emotions are tracked, analyzed and responded to, using machine learning that tries to monitor a patient's mood, or mimic a human therapist's interactions with a patient. It's an area garnering lots of interest, in part because of its potential to overcome the common kinds of financial and logistical barriers to care, such as those Ali faced.

"The hype and promise is way ahead of the research that shows its effectiveness," says Serife Tekin, a philosophy professor and researcher in mental health ethics at the University of Texas San Antonio. Algorithms are still not at a point where they can mimic the complexities of human emotion, let alone emulate empathetic care, she says.

Tekin says there's a risk that teenagers, for example, might attempt AI-driven therapy, find it lacking, then refuse the real thing with a human being. "My worry is they will turn away from other mental health interventions saying, 'Oh well, I already tried this and it didn't work,'" she says.

But proponents of chatbot therapy say the approach may also be the only realistic and affordable way to address a gaping worldwide need for more mental health care, at a time when there are simply not enough professionals to help all the people who could benefit. Someone dealing with stress in a family relationship, for example, might benefit from a reminder to meditate. Or apps that encourage forms of journaling might boost a user's confidence by pointing out where they make progress.
The chatbot, which Wysa co-founder Ramakant Vempati describes as a "friendly" and "empathetic" tool, asks questions like, "How are you feeling?" or "What's bothering you?" The computer then analyzes the words and phrases in the answers to deliver supportive messages, or advice about managing chronic pain, for example, or grief - all served up from a database of responses that have been prewritten by a psychologist trained in cognitive behavioral therapy. Wysa's chatbot-only service is free, though it also offers teletherapy services with a human for a fee ranging from $15 to $30 a week; that fee is sometimes covered by insurance.

"I could barely talk, I could barely move," she says, sobbing. "I felt like I was worthless because I could barely provide for my family."

As darkness and depression engulfed Ali, help seemed out of reach; she couldn't find an available therapist, nor could she get there without a car, or pay for it. She had no health insurance, after having to shut down her bakery.

So her orthopedist suggested a mental-health app called Wysa.

Just a year ago, Chukurah Ali had fulfilled a dream of owning her own bakery - Coco's Desserts in St. ..., which specialized in the sort of custom-made ornate wedding cakes often featured in baking show competitions. Ali, a single mom, supported her daughter and mother by baking recipes she learned from her beloved grandmother.

But last February, all that fell apart, after a car accident left Ali hobbled by injury, from head to knee.
